In the previous blog post, we discussed UBot Studio’s new logging commands. I’d now like to explain further implications of the new logging commands, especially the power they give you to automatically test your code.

Writing automated tests is a practice used by the best coders in the world. But even if you’re not a coder at all, tests will help your bots withstand whatever the internet throws at them. Automated testing is one of the best things you can do to keep bots from breaking, especially when those bots are very large. We will look at two types of automated tests: unit tests and flow tests.

The Art of Unit Testing

Another important implication of the changes is that it is now very simple to write unit tests. What is a unit test, you ask? Only one of the most important ideas to come from computer science in the last 20 years or so.

A unit test is a short piece of code that has no purpose other than to ensure some other piece of code is working correctly. By putting a robust suite of unit tests into your code, you can always rest assured that your bot will be as stable and bug-free as possible. This is especially useful for those of us who have created large, complex bots. Trying to make a change in complex code can cause a ripple effect, where one change affects some other bit of code down the line, causing headaches every step of the way. But if you have a good test suite in place, you’ll know right away if something is ever out of place, and you can fix it before it becomes a big mess.
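If you think better in text than in drag-and-drop blocks, here is the same idea as a rough Python sketch. It is only an analogy, not UBot Studio code: `pad_text` is a made-up stand-in for whatever function you want to protect, and its padding rules are my own assumption.

```python
def pad_text(text: str, width: int = 10, fill: str = "*") -> str:
    """Hypothetical function under test: center `text` in `width` characters."""
    return text.center(width, fill)


def test_pad_text() -> None:
    # This code exists only to confirm that pad_text behaves as expected.
    assert pad_text("hi", 6, "*") == "**hi**"
    # Text longer than the width comes back unchanged rather than truncated.
    assert pad_text("toolong", 3) == "toolong"


# Running the test raises an AssertionError the moment pad_text changes behavior.
test_pad_text()
```

If someone later edits `pad_text` and breaks it, the test complains right away instead of letting the problem ripple through the rest of the bot.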

It’s a good idea to keep unit tests out of the main script. The best way to do this is to create a separate tab for them. If you need to compile the bot, set that tab to invisible.

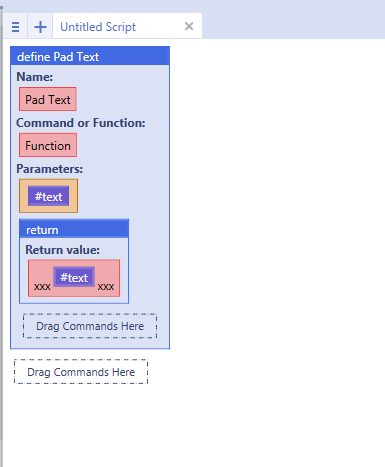

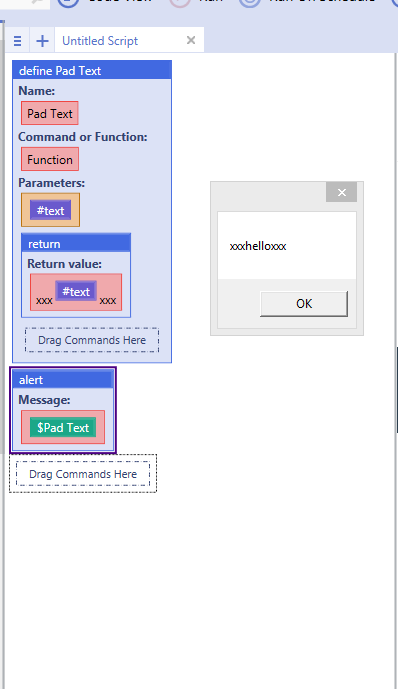

Let’s look at what unit testing looks like in UBot Studio. Say we have a simple function:

Using this function might look like this:

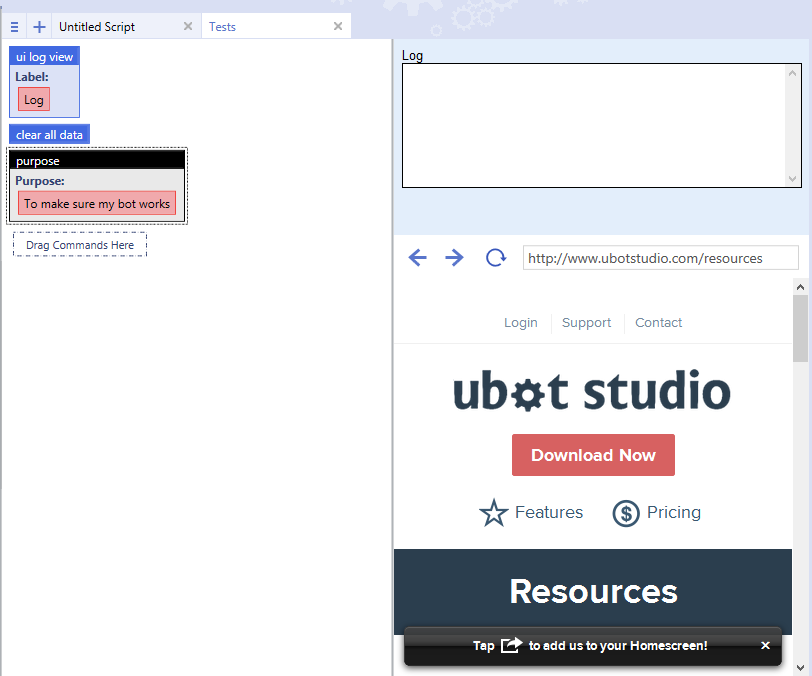

In the course of making a very large bot, it might be that I need to change this function at some point. I want to make sure it will always work as I expect, so I’ll write a test. I’ll start by making a new tab for my tests:

We’ll start with some basic boilerplate code to make sure our log looks the way we want. We’ll use the clear all data command to make sure we start fresh each time we run our tests. We’ll also set a purpose. This isn’t strictly necessary, but it gives our tests a sense of completeness. Make sure the purpose command comes after the clear all data command: the log itself is stored in a variable, so clearing the data afterward would wipe out the purpose too.
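To see why the order matters, here is a small Python sketch of the idea. Everything in it is hypothetical: the `variables` dictionary stands in for the bot's stored data, and `clear_all_data` and `purpose` are simplified imitations I wrote for illustration, not the real commands.

```python
# Hypothetical stand-in for the bot's variable storage; the log lives in it.
variables: dict = {}


def clear_all_data() -> None:
    """Wipe every stored variable, including the log."""
    variables.clear()


def purpose(text: str) -> None:
    """Record the purpose of this run as a log entry."""
    variables.setdefault("log", []).append(f"PURPOSE: {text}")


# Correct order: clear first, then state the purpose.
clear_all_data()
purpose("Unit tests for the padded text function")
log_after_correct_order = list(variables.get("log", []))

# Wrong order: the purpose is written, then immediately wiped.
purpose("Unit tests for the padded text function")
clear_all_data()
log_after_wrong_order = list(variables.get("log", []))
```

In the first case the log keeps its purpose line; in the second it ends up empty.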

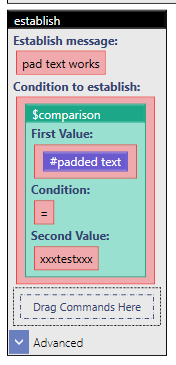

Great! We’re ready to test. We’ll drag in an establish command. For a unit test, we want it to fail by default, run the child commands, and then pass. To do this, we’ll set a variable. We’ll test that the variable matches the output our padded text function produces.

On its own, we know this test will fail, because we haven’t actually set the variable. Let’s see what happens if we run it.

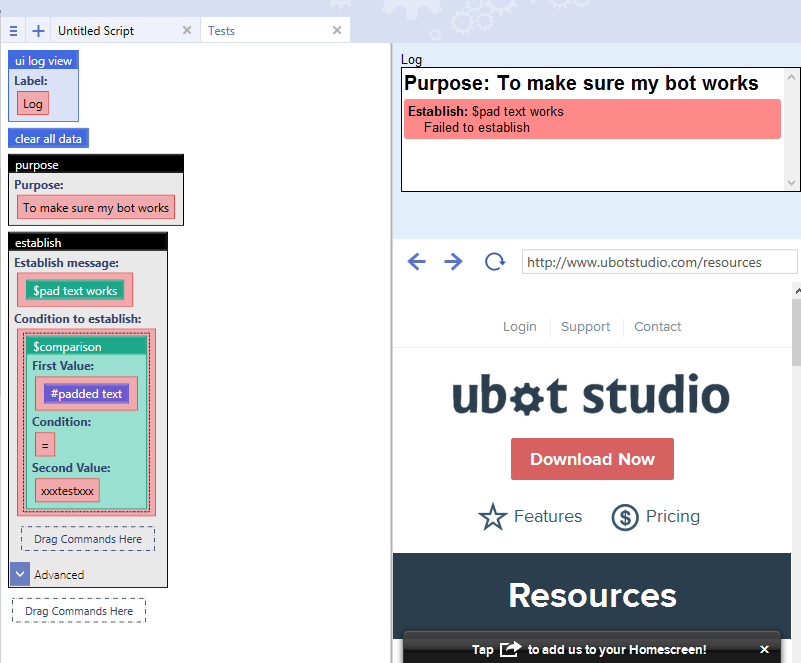

Oh no! Our test failed! Let’s fix that. We’ll add a set command to make a variable that satisfies the starting condition.

We give it a run and…

Hurray!
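The fail-first, then-pass pattern we just walked through can be sketched in Python as well. This is my own rough analogy of how the test behaves, not UBot Studio's actual implementation: the `establish` function, the `state` dictionary, and the padded value `**hi**` are all assumptions made up for the example.

```python
from typing import Callable, Dict, List


def establish(name: str,
              condition: Callable[[], bool],
              child_commands: Callable[[], None],
              log: List[str]) -> bool:
    """Check a condition; if it is unmet, run the children and check once more."""
    if not condition():
        child_commands()
    passed = condition()
    log.append(f"{'PASS' if passed else 'FAIL'}: {name}")
    return passed


state: Dict[str, str] = {}   # hypothetical variable storage
log: List[str] = []


def looks_padded() -> bool:
    # The condition: the variable must look like padded text.
    return state.get("padded") == "**hi**"


# First run: no child command sets the variable, so the test fails.
establish("padded text", looks_padded, lambda: None, log)


def set_padded() -> None:
    # Stand-in for calling the padded text function and storing its result.
    state["padded"] = "hi".center(6, "*")


# Second run: the child command satisfies the condition, so the test passes.
establish("padded text", looks_padded, set_padded, log)
```

Seeing the test fail first is useful: it shows the test can actually detect a problem, so a later pass means something.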

Let’s add some more defines to our script and see if we can keep it clean.

By now our unit tests are looking a little cluttered.

OK, maybe four isn’t bad, but let’s pretend it’s more like 40. We can keep our life much more organized if we put our tests in different sections. You’ll have to make a decision on how tests should be grouped. In this example, I’m going to group them by tests for commands vs. tests for functions.
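As a rough picture of what grouping buys you, here is a Python sketch of a sectioned test run. The section names mirror the grouping above, but the individual tests and the `run_sections` helper are hypothetical examples of mine, not part of UBot Studio.

```python
from typing import Callable, Dict, List

visited: List[str] = []   # hypothetical state changed by a "command"


def test_record_visit() -> bool:
    # A command-style test: run something, then check its side effect.
    visited.clear()
    visited.append("home")
    return visited == ["home"]


def test_pad_text() -> bool:
    # A function-style test: check a returned value.
    return "hi".center(6, "*") == "**hi**"


def test_shout() -> bool:
    return "hi".upper() == "HI"


def run_sections(sections: Dict[str, List[Callable[[], bool]]]) -> List[str]:
    """Run every test, grouping the report lines under their section name."""
    report: List[str] = []
    for section_name, tests in sections.items():
        report.append(f"[{section_name}]")
        for test in tests:
            status = "PASS" if test() else "FAIL"
            report.append(f"  {status}: {test.__name__}")
    return report


report = run_sections({
    "Commands": [test_record_visit],
    "Functions": [test_pad_text, test_shout],
})
print("\n".join(report))
```

With 40 tests instead of three, the section headers in the report make it much quicker to find which area broke.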

That feels more organized. Let’s give it a run and look at our results.

Uh oh! I’m seeing red! Let’s look at what broke and why. On closer inspection, I realize that I forgot the www and a trailing slash in all my URLs. I’ve gone through and fixed them all. Let’s try again.

A little slice of nerd satisfaction is a test report with lots of green. Also take note of how the sections affect the organization of the log. This is a solid, if simple, test suite if ever there was one.

To download this bot, click here.

Seth